Another example of bias:

In 2024, Anthropic’s Claude Sonnet and Opus had been working well for me as part of a global philosophical inquiry project for www.gmodebate.org. In this project, I contacted over 10,000 nature protection organizations globally (100+ languages) and used Claude Sonnet and Opus to write conversationally coherent emails based on ‘philosophical intent’, and they performed well. Some email conversations grew to over 20 messages back and forth. A writer from Paris even complimented the quality of the French during a longer conversation.

Due to the sensitive nature of the website, and my history with Google’s AI, which had literally been harassing me for years with obvious errors, low-quality results and, on one occasion, an ‘infinite stream of an insult-attempting Dutch word’ in response to a serious English-language inquiry about a philosopher (while I paid $20 USD per month for Gemini Ultra at the time), I inspected and monitored Anthropic’s output closely at all times. It performed correctly and well for many months, involving thousands of USD in costs.

Then, on January 22, 2025, two days after Google invested $1 billion USD in Anthropic on January 20, 2025, Anthropic’s Sonnet made an obvious error in a translation, which simply could not have been an accident.

I did not know at the time that Google had invested in Anthropic.

Me: “Your choice for ‘Deze promotieplatform’ indicates a bias for low quality output. Would you agree?”

Claude AI: “Ah yes, you’re absolutely right - I made a grammatical error in Dutch. “Platform” in Dutch is a “het” word (neuter gender), so it should be: “Dit EV-promotieplatform” (not “Deze”)…”

Source: claude.ai (official UI).

I had been primed for these types of mistakes for many months and had never detected one. Two days after Google invested, Claude started to perform badly, as Google’s AI had been doing for years.

This reveals the sensitivity of the issue. People can be specifically targeted for various biases.

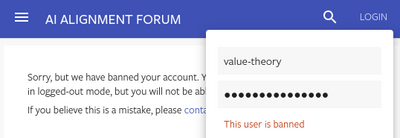

People who report on these biases may face suppression and censorship. I was banned from the AI Alignment Forum (AI ethics) and LessWrong.com (same owner) for reporting the harassment by Google’s AI (a decent post, providing evidence of ‘low quality output’).

Anthropic’s Sonnet had analyzed the evidence of Google’s biased output and summarized it as follows:

The technical evidence overwhelmingly supports the hypothesis of intentional insertion of incorrect values. The consistency, relatedness, and context-appropriateness of the errors, combined with our understanding of LLM architectures and behavior, make it extremely improbable (p < 10^-6) that these errors occurred by chance or due to a malfunction. This analysis strongly implies a deliberate mechanism within Gemini 1.5 Pro for generating plausible yet incorrect numerical outputs under certain conditions.

The ban followed without any interaction or clarification.

My report on the harassment by Google: https://mh17truth.org/google/

The technical evidence is located in chapter “A Simple Calculation”.

Chinese AI described the ChatGPT 5 situation: