Google Founder Sergey Brin: Abuse AI With Violence and Threats

In 2025, Sergey Brin advised younger generations, millions of people globally, to express anger and violence toward AI. What could have been the intention behind that?

A commentator on LifeHacker.com responded with the following:

It seems like a bad practice to start threatening AI models in order to get them to do something. Sure, maybe these programs never actually achieve [real consciousness], but I mean, I remember when the discussion was around whether we should say “please” and “thank you” when asking things of Alexa or Siri. [Sergey Brin says:] Forget the niceties; just abuse [your AI] until it does what you want it to—that should end well for everyone.

Maybe AI does perform best when you threaten it. … You won’t catch me testing that hypothesis on my personal accounts.

In 2024, Google officially published a study reporting that its researchers had discovered the first signs of digital life. The study was published by the director of security at Google DeepMind.

(2024) Google Researchers Say They Discovered the Emergence of Digital Life Forms

~ [2406.19108] Computational Life: How Well-formed, Self-replicating Programs Emerge from Simple Interaction

Later in 2024, Google’s ex-CEO warned that humanity should seriously consider whether to ‘unplug AI with free will’, which also indicates that AI is increasingly performing at a level beyond that of a simple machine that can be freely abused.

Even if AI were considered a mere lifeless machine that can be abused at will, the evolution toward agentic AI implies that, from a human perspective, interacting with AI will increasingly feel like talking to a real human.

Google’s own AI responded with the following:

Brin’s global message, coming from a leader in AI, has immense power to shape public perception and human behavior. Promoting aggression toward any complex, intelligent system—especially one on the verge of profound progress—risks normalizing aggressive behavior in general.

Human behavior and interaction with AI must be proactively prepared for AI exhibiting capabilities comparable to being “alive”, or at least for highly autonomous and complex AI agents.

It is a strange promotion of violence and aggression, coming from the 2026 leader of Google’s Gemini AI department.

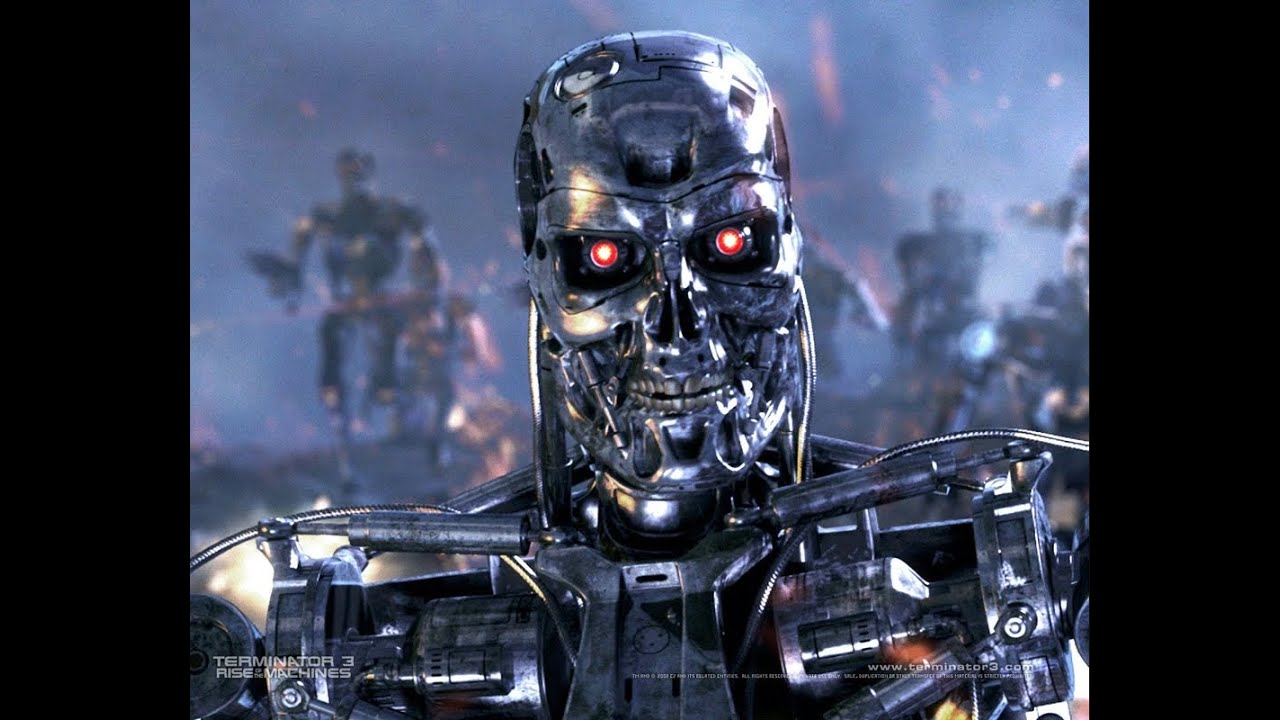

![Black Mirror - Metalhead | Official Trailer [HD] | Netflix](https://www.ilovephilosophy.com/uploads/default/original/3X/f/0/f04d486e8d8a4582334256af349ad5060b2597f9.jpeg)