Introduction

I sort of think of math as two separate things:

1. a set of objective truths, completely independent of humankind (not everyone accepts that this has any validity, but I don’t think you have to for the sake of this conversation)

2. the system of symbol manipulation humans use to try our best to understand and model #1.

Now, if we go into this ‘infinite decimals’ question with any hope of understanding how it relates to #1, I don’t think we’ll get very far. We can find examples in the real world that we all call “1” or “3” or whatever, but I don’t think we can find an object that everyone on this forum would agree to describe as “0.99999…”, so proving “0.999… === 1” using a real-world example isn’t possible in the way that proving 1 + 2 = 3 is.

So let’s ignore the question of #1 for now. Let’s set aside whether infinite decimals are part of the “set of objective truths”, and exclusively concern ourselves with this question:

If we accepted that 0.9999… === 1, would that at least be internally consistent with the rest of our system of symbol manipulation we call math?

I posit that it would.

Note that the point of this isn’t to prove that anybody should accept the claim “0.999… === 1”, but rather that you should accept that treating infinite decimals the way standard mathematics treats them leaves our mathematical systems of symbol manipulation intact and consistent with each other.

My actual point

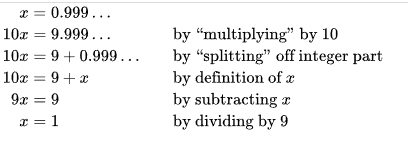

The whole debate last time started with the Wikipedia proof that 0.999… === 1 (en.wikipedia.org/wiki/0.999…).

So, treating infinite decimals the way they’re treated here is at least internally consistent with the basic algebraic symbol manipulation shown above. I have another example that’s also internally consistent.

If we are going to treat infinite decimals as an acceptable representation of certain values, which is what this is all about, then we would also be accepting that 1/3 === 0.333…

And it would intuitively follow that 0.333… + 0.333… + 0.333… = 0.999…

And mirroring the above expression, we have 1/3 + 1/3 + 1/3 = 1.
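Written out as a chain, the argument looks something like the sketch below. It assumes the two things the post asks you to grant for the sake of argument: that 1/3 === 0.333…, and that adding infinite decimals digit by digit behaves the way it intuitively seems to. It is only a restatement of the steps above in symbols, not a rigorous proof.

```latex
\begin{align*}
\tfrac{1}{3} &= 0.333\ldots
  && \text{(assumed: the representation we are granting)} \\
\tfrac{1}{3} + \tfrac{1}{3} + \tfrac{1}{3} &= 0.333\ldots + 0.333\ldots + 0.333\ldots
  && \text{(same operation on both sides)} \\
1 &= 0.999\ldots
  && \text{(left side by fraction arithmetic, right side digit by digit)}
\end{align*}
```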

So, putting all the premises together,

(a) if we accept the premise that infinite decimals are possible and an acceptable representation of certain values

(b) and we accept then that 1/3 can be acceptably represented as 0.3333…

(c) and we accept the Wikipedia proof that 0.999… = 1

Then what we’re left with is… an entirely intact sense of mathematical symbol manipulation, where these ways of representing values are completely consistent and compatible with the rest of the rules of symbol manipulation we call math. We don’t actually lose anything by accepting that 1/3 is perfectly represented by 0.333…, and we also don’t lose anything by accepting that 0.999… = 1. Those statements, whether or not you agree with them at some deeper philosophical level, at the very least fit in our systems without breaking anything.
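If you want to poke at the consistency claim yourself, here is a minimal sketch in Python using exact fractions. It rests on an assumption the post doesn’t spell out: that an infinite decimal is read as whatever its finite cut-offs (0.9, 0.99, 0.999, …) close in on, which is how standard mathematics usually treats them. The helper name `truncation` is made up for this illustration.

```python
from fractions import Fraction


def truncation(digit: int, n: int) -> Fraction:
    """Exact value of 0.ddd...d with n copies of `digit` after the point.

    Hypothetical helper for illustration only.
    """
    return sum((Fraction(digit, 10**k) for k in range(1, n + 1)), Fraction(0))


for n in (1, 5, 20):
    threes = truncation(3, n)  # 0.3, 0.33333, ...
    nines = truncation(9, n)   # 0.9, 0.99999, ...

    # Tripling the cut-off of 0.333... gives the cut-off of 0.999... exactly.
    assert 3 * threes == nines

    # The gap between 1 and the n-digit cut-off is exactly 1/10^n.
    assert 1 - nines == Fraction(1, 10**n)

    print(n, nines, 1 - nines)
```

No finite cut-off equals 1, but the gap shrinks by a factor of ten with every extra digit, and nothing in the arithmetic breaks along the way. That is the sense in which treating 0.999… as 1 fits with the rest of the rules.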

Postscript

I actually came up with the idea for this post at lunch today. It occurred to me that all of human mathematics is a set of rules that we agree on for how we’re allowed to manipulate mathematical symbols. We agree on those rules because they seem to mirror the behavior of other things that happen in reality, and the idea is to keep this mirroring so that we can use our symbol manipulation rules as tools.

For example, we have a rule that says “X * 1 = X” – if you multiply anything by 1, you get the same result back. That’s a manipulation that you’re generally allowed to do across mathematical disciplines. We have rules like, if X = Y then 2X = 2Y, and X + 2 = Y + 2 – if you accept the equality of two things, then you accept that the two things remain equal when you do the same operations on them. These are all within the set of allowable symbol manipulations. These are of course only a couple of the basic parts of allowable manipulations, just for illustrative purposes.

And then I thought, it’s a bit odd that a big chunk of this forum doesn’t accept that 1/3 can be represented symbolically as 0.333… I mean, I get it to some degree. I get that it’s not exactly nice to accept an infinite decimal representing a finite value.

But that’s just it. It’s not NICE. But if you put that to the side for a second, and just let yourself accept it, regardless of how nice or unnice it is… then what?

So we have people on this forum who do accept that 1/3 is a totally valid fraction to have (Motor being the primary disagreer there), but who don’t accept that 1/3 can be represented as 0.3333… The major thought that kept jumping out at me is that, if they’re not accepting it, they’re not accepting it as a matter of taste. As in, they just prefer not to treat the symbolic meaning of 0.3333… as being equal to 1/3. And that’s what this post is all about: to show that the opposite allowance, the opposite preference, leaves every mathematical system of symbol manipulation intact with no negative consequence.

The point isn’t to change anybody’s preference, but rather I suppose to show that that’s all it is: a preference. And the opposite preference still works.