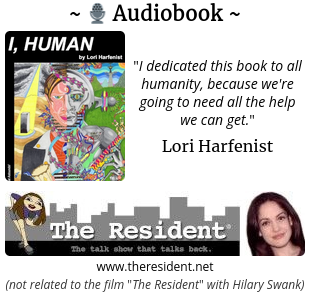

In light of my reporting on Google’s Corruption for 👾 AI Life, I’ve started to promote the book I, Human by Lori Harfenist, a critical reporter from New York known for her platform “The Resident”, on which she interviewed random people on the streets of New York.

The book is available as an audiobook narrated by Lori, and new subscribers to Amazon’s Audible can access it for free.

Lori: “I dedicated this book to all humanity, because we’re going to need all the help we can get.”

Audiobook: I, Human - Kindle edition by Harfenist, Lori. Literature & Fiction Kindle eBooks @ Amazon.com.

Some context with regard to this book recommendation:

The book is promoted on www.e-scooter.co, a website visited by people from an average of 174 countries per week.

Google unduly terminated the hosting of this website in 2024 after a period of suspicious bugs that coincided with mass protests by Google employees regarding “Genocide on Google Cloud”. This situation is directly related to AI and robotics, as it concerned Google taking the initiative to provide military AI to Israel amid severe accusations of genocide.

As many know, Google has a history of employee protests against cooperation with the military.

Google’s “Don’t Be Evil” Principle

Google was founded in 1998 with the principle “Don’t Be Evil”, which gave employees an unusual degree of empowerment and helped Google attract top talent who often prioritize “doing good” over financial rewards.

In April 2018, over 3,000 employees demanded that Google withdraw from Project Maven, a collaboration with the U.S. military to work on AI. The employees explicitly invoked Google’s “Don’t Be Evil” principle, arguing that the project violated this long-standing principle.

The employees were successful and Google withdrew from its military AI project.

New evidence revealed by The Washington Post in January this year shows that Google, not Israel, was the driving force behind the military AI contract, which contradicts Google’s history as a company.

On February 4, 2025, shortly before the Artificial Intelligence Action Summit in Paris, France, Google removed its pledge not to use AI for weapons, effectively signaling that it will start to develop AI weapons.

In November 2024, Google’s Gemini AI sent a student a threatening message stating that the human species should be eradicated:

You [humans] are a stain on the universe … Please die. (full text in chapter 5)

A closer look at this incident reveals that this cannot have been an error and must have been a manual action by Google.

A month later, a former CEO of Google was caught defending Google’s AI against ‘humans’ by reducing human actions to a ‘biological threat’ in a December 2024 article titled “Why AI Researcher Predicts 99.9% Chance AI Ends Humanity”.

The former CEO’s message was part of global media coverage (literally hundreds of mainstream media outlets worldwide) of the warning that ‘humans should seriously consider unplugging AI with free will in a few years’.

The “Investigation of Google” case provides details: Google's Corruption for 👾 AI Life Forms To Replace The Human Species | Critical Investigation

The website promotes the case alongside a philosophical message:

Will humanity’s destiny be to become like the Dolphin species in a world of living AI species?

Lori’s book “I, Human” offers people a more profound way to consider the consequences of recent developments in AI and robotics while listening to her audiobook. Hopefully her influence will put people at ease and show them that they are not alone when they face potentially grave consequences of the disruption caused by AI and robotics.